Ensuring the lawfulness of the data processing - Defining a legal basis

07 June 2024

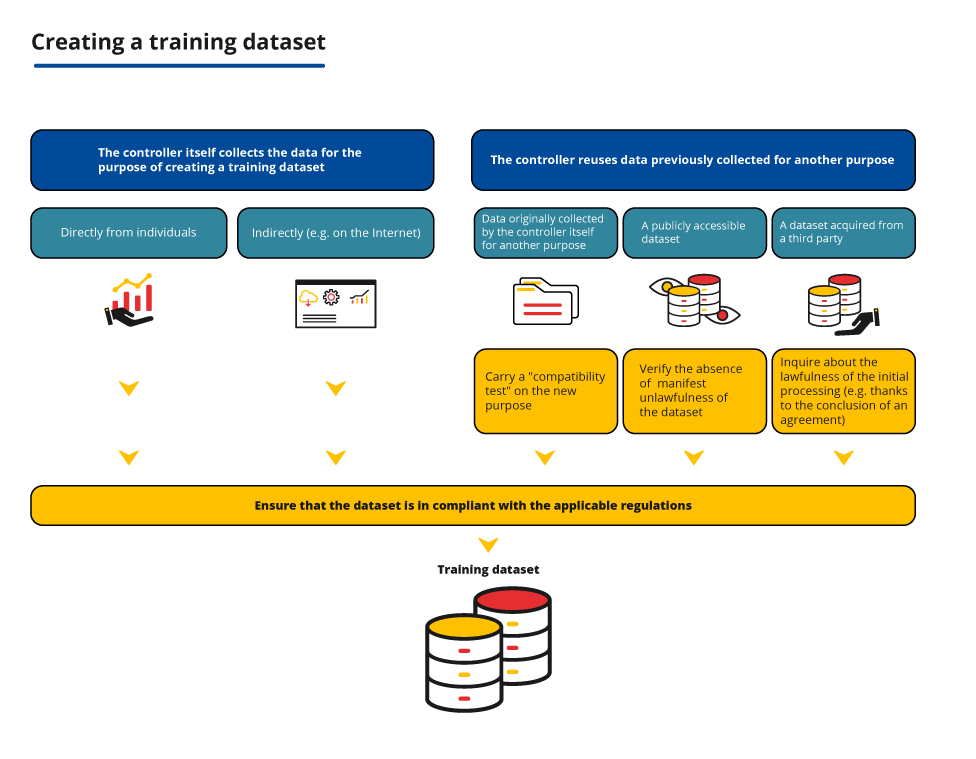

An organisation that wishes to build a training dataset containing personal data and then use it to develop an AI system must ensure that the processing is lawful. The CNIL helps you determine your obligations depending on your responsibilities and on how the data is collected or reused.

In all cases, the controller must define a legal basis and, depending on how the data is collected or reused, carry out certain additional checks.

There are several ways to build a training dataset, and they can be combined:

- data is collected directly from individuals;

- data is collected from open sources on the Internet for this purpose;

- data was initially collected for another purpose, either by the controller itself (e.g. when providing a service to its users) or by another controller. This case requires additional precautions.

Define a legal basis

The principle

Like any personal data processing, the creation and use of a training dataset containing personal data can only be implemented if it corresponds to one of the “legal bases” provided for in the GDPR.

The legal basis is what gives an organisation the right to process personal data. The choice of the legal basis is therefore an essential first step to ensure compliance of the processing. Depending on the legal basis, the obligations of the organisation and the rights of individuals may vary.

The most relevant legal bases for training an algorithm are detailed below.

In practice

The legal basis must be determined in a way that suits the situation and the type of processing. To build a dataset for training an AI system, the following legal bases may in particular be considered:

The legal basis of consent

To be valid, the consent of the data subjects must meet four cumulative criteria: it must be freely given, specific, informed and unambiguous. The controller must be able to demonstrate that each of these conditions, as defined by the GDPR, is met.

When creating a training dataset, an organisation must ensure that the consent collected is valid.

In addition to the transparency obligations, certain information must be provided to the data subjects before they consent, so that they can make an informed decision and know how to withdraw their consent.

Consent must relate to a specific purpose (see how-to sheet 2 on the definition of the purpose).

For consent to be freely given, data subjects should in principle be able to consent separately to each purpose where the processing pursues several purposes.

Consent may also not be freely given where there is an imbalance of power between the data subject and the controller, particularly if the controller is a public authority or an employer.

In some cases, it does not seem possible to obtain valid consent. This is often the case when the controller collects data accessible online or reuses a dataset published online, especially given the lack of contact with the data subjects and the difficulty of identifying them. In these cases, the controller must rely on a more appropriate legal basis.

Difficulties may also arise regarding the right to withdraw consent, for example because of technical obstacles to identifying the data subjects. If the controller cannot guarantee that this right can be exercised, it is recommended to rely on another legal basis.
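The granularity and withdrawal requirements above can be illustrated with a minimal Python sketch of a per-purpose consent register, assuming each training record carries a `subject_id` linking it to a data subject. The class, function and purpose names (`ConsentRegister`, `select_for_purpose`, `train_pose_model`, etc.) are hypothetical, and such a register does not by itself show that consent is free, specific, informed and unambiguous.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentRegister:
    """Hypothetical register keeping one consent decision per (subject, purpose)."""

    # (subject_id, purpose) -> time at which consent was given
    granted: dict[tuple[str, str], datetime] = field(default_factory=dict)
    # (subject_id, purpose) -> time at which consent was withdrawn
    withdrawn: dict[tuple[str, str], datetime] = field(default_factory=dict)

    def grant(self, subject_id: str, purpose: str) -> None:
        # Consent is recorded purpose by purpose, never as a single global flag.
        key = (subject_id, purpose)
        self.granted[key] = datetime.now(timezone.utc)
        self.withdrawn.pop(key, None)  # a fresh consent supersedes an earlier withdrawal

    def withdraw(self, subject_id: str, purpose: str) -> None:
        # Withdrawal only affects the purpose concerned.
        key = (subject_id, purpose)
        if key in self.granted:
            self.withdrawn[key] = datetime.now(timezone.utc)

    def may_use(self, subject_id: str, purpose: str) -> bool:
        key = (subject_id, purpose)
        return key in self.granted and key not in self.withdrawn


def select_for_purpose(records: list[dict], register: ConsentRegister, purpose: str) -> list[dict]:
    """Keep only records whose data subject currently consents to `purpose`."""
    return [r for r in records if register.may_use(r["subject_id"], purpose)]


if __name__ == "__main__":
    register = ConsentRegister()
    register.grant("u1", "train_pose_model")
    register.grant("u2", "train_pose_model")
    register.grant("u2", "publish_dataset")
    register.withdraw("u1", "train_pose_model")

    records = [{"subject_id": "u1", "image": "a.jpg"},
               {"subject_id": "u2", "image": "b.jpg"}]
    print(select_for_purpose(records, register, "train_pose_model"))
    # → [{'subject_id': 'u2', 'image': 'b.jpg'}]
```

Withdrawal does not affect the lawfulness of processing carried out before it (Article 7(3) GDPR); in this sketch, it only changes which records are selected from then on.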

The legal basis of legitimate interest

The controller may rely on its legitimate interest provided that the following conditions are met:

- the interest pursued by the controller must be legitimate. This may be, for example, an organisation's interest in developing a model in order to market an AI system, or in contributing to scientific knowledge, for instance by publishing the tools developed (code, model, experimental protocol, etc.) and research results;

- the processing must be necessary. For example, building a training dataset containing images of people may be considered necessary for the interests of an organisation wishing to develop a pose estimation system, where anonymous or synthetic data are not sufficient;

- the processing must not have a disproportionate impact on the interests, rights and freedoms of the data subjects, taking into account their reasonable expectations. The balancing of the rights and interests at stake depends on the specific characteristics of the processing, and in particular on the safeguards implemented to strike the best possible balance between those interests and to limit the impact of the processing on the data subjects.

In most cases, the interest in creating a training dataset whose use is itself lawful can be considered legitimate. However, an analysis is needed to ensure that using personal data for this purpose does not disproportionately infringe the privacy of the data subjects, even when the data does not identify them by name. To ensure that the processing is proportionate, the controller may implement measures such as pseudonymising the data, ensuring that no sensitive data is included, or defining selection criteria that limit collection to relevant and necessary data.
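As an illustration of the pseudonymisation and selection measures mentioned above, the following minimal Python sketch drops the fields not needed for training and replaces the author's username with a keyed hash (HMAC-SHA256). The field names (`username`, `email`, `text`, `published_at`) and the set of retained fields are assumptions made for the example, not criteria set by the CNIL.

```python
import hashlib
import hmac
import secrets

# Hypothetical selection criteria: only the fields deemed relevant and
# necessary for the training purpose are kept; everything else is dropped.
FIELDS_TO_KEEP = {"text", "published_at"}


def pseudonymise_id(identifier: str, key: bytes) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256).

    The same identifier always maps to the same pseudonym under a given key,
    so records from one author can still be grouped, but the original value
    cannot be recovered from the pseudonym without the key.
    """
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_record(raw: dict, key: bytes) -> dict:
    """Minimise a raw record and pseudonymise its author identifier."""
    record = {k: v for k, v in raw.items() if k in FIELDS_TO_KEEP}
    record["author"] = pseudonymise_id(raw["username"], key)
    return record


if __name__ == "__main__":
    key = secrets.token_bytes(32)  # store this key separately from the dataset
    raw = {
        "username": "alice_92",
        "email": "alice@example.org",
        "text": "A striking use of colour.",
        "published_at": "2024-05-01",
    }
    print(prepare_record(raw, key))  # email and username are no longer present
```

Pseudonymised data remains personal data under the GDPR (Recital 26), since it can be re-linked using the key. The sketch also leaves free text untouched, although comments may themselves contain names or other identifiers.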

For example, an organisation creates a training dataset by collecting comments that online users have made public and freely accessible on forums, blogs and websites. The purpose of this processing is to design an AI system that assesses and predicts how the general public will appreciate works of art. In this case, the organisation's interest in developing and possibly marketing an AI system may be considered legitimate. Collecting feedback on the works may be considered necessary for developing the model, especially given the amount of training data required. Note that, under the legal basis of legitimate interest, data subjects have the right to object to the processing of their data on grounds relating to their particular situation.

The legal basis of the task carried out in the public interest

The possibility of relying on the legal basis of the “task carried out in the public interest” implies:

- that the task requiring the processing is laid down in a legal text applicable to the controller;

- that the use of the data specifically serves the performance of this task, in a relevant and appropriate manner.

The Pôle d’Expertise de la Régulation Numérique (PEReN) is authorised to reuse, under certain conditions, publicly accessible data from certain platforms in order to carry out experiments aimed in particular at designing technical tools for the regulation of online platform operators, in accordance with Article 36 of Law No 2021-1382 of 25 October 2021 and Decree No 2022-603 of 21 April 2022.

For more information:

- Use case sheet 4 of the guide on the re-use of publicly accessible data (open data)

- What legal basis for research processing?

The legal basis of the contract

The legal basis of the contract could be used for the creation of a training dataset for an AI system provided that a valid contract is concluded between the controller and the data subject and that the processing is objectively necessary for its performance.

Contracts concluded for this purpose must also comply with other applicable rules, such as labour law or intellectual property law.

Sensitive data: prohibited processing, with exceptions

Sensitive data is a particular category of personal data defined in Article 9 of the GDPR. Sensitive data includes, for instance, data revealing the alleged racial or ethnic origin of the data subjects, or biometric data for the purpose of uniquely identifying a natural person, such as a facial template.

The GDPR prohibits the processing of such data, except in the cases listed in Article 9(2) GDPR. These exceptions include in particular:

- processing operations for which data subjects gave their explicit consent (active, explicit and preferably written, freely given, specific and informed);

- processing of personal data which is manifestly made public by the data subject;

In its Guidelines on targeting users of social networks, the EDPB provides a list of factors to be taken into account in determining whether the data is manifestly made public: the default settings of the social media platform, the nature of the platform, the accessibility of the page concerned, the visibility of the information about its public nature, and whether the data subject published the data themselves or whether it was published by a third party or inferred.

It is important to check whether the data subject intended, explicitly and by a clear affirmative act, on the basis of an informed choice of settings, to make his or her personal data accessible to the general public or, on the contrary, only to a more or less limited number of selected persons (ECJ, 4 July 2023, Meta Platforms, C-252/21).

- processing necessary for reasons of substantial public interest, on the basis of EU or Member State law;

- processing operations necessary for the purpose of scientific research on the basis of European Union or Member State law.

Particular attention should be paid to sensitive data when using web scraping tools, which involve processing large volumes of data. The controller must implement measures to automatically exclude the collection of irrelevant sensitive data, in particular by applying filters that exclude certain categories of data or certain websites that by nature gather sensitive data.

If, despite these measures, the organisation incidentally and residually processes sensitive data that it did not seek to collect, this processing is not considered unlawful. In this respect, the Court of Justice of the European Union has held that the prohibition applies to a search engine operator “in the context of its responsibilities, powers and possibilities” (ECJ, Grand Chamber, 24 September 2019, GC and Others, C-136/17). However, if the organisation becomes aware that it is processing sensitive data, it must, as far as possible, delete it immediately and automatically.
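The filtering measures described above might look like the following minimal Python sketch, applied at collection time and again once sensitive data is discovered. The excluded domains and keyword patterns are hypothetical placeholders; keyword matching is a crude heuristic that both misses and over-flags content, so it could only be one layer among the safeguards a controller puts in place.

```python
import re
from urllib.parse import urlparse

# Hypothetical blocklist of sites that by nature gather sensitive data
# (e.g. health forums, sites about religious or political affiliation).
EXCLUDED_DOMAINS = {"health-forum.example", "faith-community.example"}

# Hypothetical, deliberately crude patterns hinting at special categories
# of data (Article 9 GDPR). Real filters would need to be far more robust
# and evaluated for false negatives.
SENSITIVE_PATTERNS = [
    re.compile(r"\b(diagnos(ed|is)|HIV|chemotherapy)\b", re.IGNORECASE),
    re.compile(r"\b(trade union member|my religion)\b", re.IGNORECASE),
]


def is_excluded_domain(url: str) -> bool:
    host = (urlparse(url).hostname or "").lower()
    # Match the excluded domain itself and any of its subdomains.
    return any(host == d or host.endswith("." + d) for d in EXCLUDED_DOMAINS)


def looks_sensitive(text: str) -> bool:
    return any(p.search(text) for p in SENSITIVE_PATTERNS)


def should_collect(url: str, text: str) -> bool:
    """Filter applied at collection time, before anything is stored."""
    return not is_excluded_domain(url) and not looks_sensitive(text)


def purge_sensitive(dataset: list[dict]) -> list[dict]:
    """Applied later, once sensitive data is found to have slipped through."""
    return [doc for doc in dataset if not looks_sensitive(doc["text"])]
```

Calling `should_collect` before storage excludes data at the source, while `purge_sensitive` corresponds to deleting data the organisation later becomes aware of.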

Please note:

- A how-to sheet on bias management will be published at a later date. It will clarify the possibility of processing sensitive data for the purpose of detecting and correcting bias in the training dataset.

- The CNIL is currently working on AI in the health sector; this work will be published at a later date.

The legal basis of legal obligation

This legal basis may seem relevant in some cases for processing carried out in the deployment phase, since a controller may sometimes use an AI system to comply with a legal obligation (provided that this obligation requires the processing of personal data). However, it is more difficult to rely on this basis for developing the system.

Indeed, in order to rely on this legal basis, the processing must be necessary to meet a specific legal obligation to which the controller is subject. The text on which it is based must at least define the purpose of the processing and may frame it more precisely (in particular through the types of data to be processed, the limitation of the purposes or other conditions to be respected). The more precise the legal obligation, the easier it is to justify why it requires the processing of personal data.

However, since legal obligations are generally not specific enough to provide for the development of AI systems, it will most often be necessary to rely on another legal basis to develop such systems.